Has OpenAI Sora just launched the era of generative video?

Just a few weeks ago, I wrote that we’re probably still a long way from being able to create a movie from a natural language prompt.

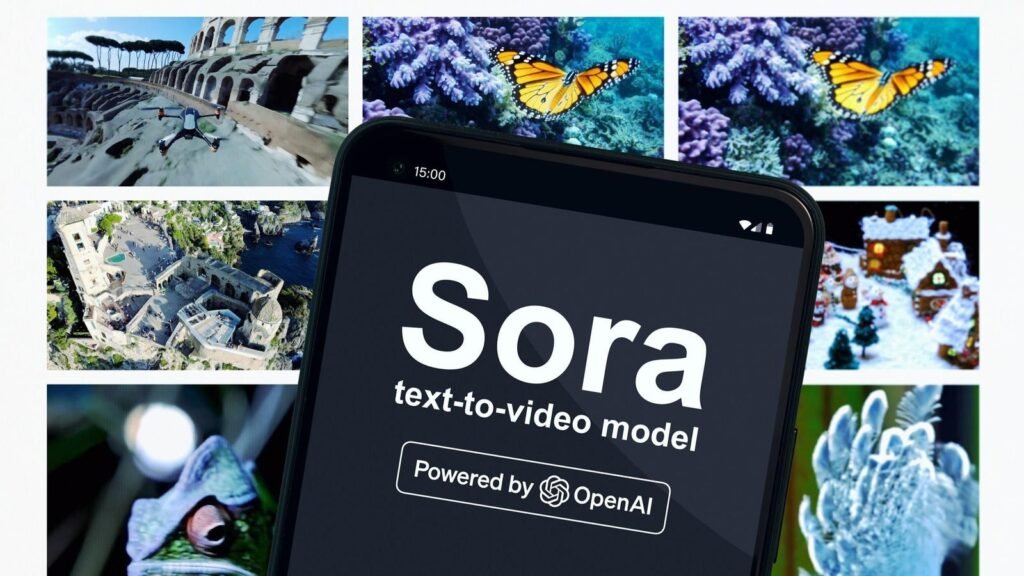

Now it looks like it might happen a lot sooner than I thought. OpenAI – creator of ChatGPT, the chatbot that launched the current generative AI craze – has just announced its own text-to-video model, Sora.

To say the results stunned the AI community is an understatement. While we can’t use it for ourselves yet, the demo videos show near-photorealistic footage of a Gold Rush-era American town and of a woman walking through a city, all generated from simple text prompts.

According to people I’ve talked to, that puts OpenAI two or three years ahead of where generative video was expected to be by now. This is just another sign that the AI revolution is going to unfold at a much faster pace than many predict.

But generative video – while technically astonishing – creates ethical and societal challenges that go beyond those posed by the automated creation of text, images and sounds.

So let’s take a look at what it is, what it does, and perhaps most importantly, what it means for a world in which it will inevitably become increasingly difficult to tell the difference between the real and the digital.

So what is Sora?

Basically, Sora is to video what ChatGPT is to writing, and Dall-E 3 is to image generation. You type in what you want to see and it appears, in full motion, before your eyes.

None of the videos released so far have sound, but given the advances in AI sound and music generation, we can assume that sound will follow soon.

Generative AI video creators aren’t entirely new. I described a number of them that have appeared over the last year or so in the article I linked to at the start of this piece. But most of the time, even though they generate text, overlays and effects, they do not produce real video animation. However, there are some exceptions, such as Track.

At this early stage, as impressive as it is, it’s not going to give us the next Toy Story from a prompt. But the potential is virtually limitless. Cinematographers can use it to visualize concepts and scenes or generate special effects. Teachers can create immersive historical reenactments, and makers can use it to create prototypes and demonstrations.

At the moment, Sora can generate videos up to one minute long. And it does more than produce a set of consecutive images that give the impression of movement (as if we could consider that simple now). It is able to track the positioning of objects so that they move realistically and consistently relative to other objects, passing in front of or behind them, for example.

It can even perform complex operations such as “remembering” objects as they leave the frame so that they are accurately recreated when they come back into view.

It is of course not perfect, and OpenAI admits that it will generate inconsistencies, such as objects not following the laws of physics or causality.

But from what we’ve seen, it’s an amazing technology that gives a tantalizing glimpse of what we’ll soon be able to do!

How does it work?

Like Dall-E and other image generators, Sora is essentially a diffusion model, meaning that it starts from random “noise” and gradually removes it, transforming it into an image that matches the prompt.

Over many successive denoising steps, the images that make up the video become more defined.
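To make the idea of "starting from noise and gradually removing it" more concrete, here is a toy sketch. It is not how Sora is implemented, and OpenAI has not published its code. The `target` list is a hypothetical five-pixel "image" that stands in for what a trained network would predict from the prompt; a real diffusion model has to learn that prediction, whereas this toy is simply handed it. The function names are also made up for illustration.

```python
import random


def toy_denoise(target, steps=50, seed=0):
    """Start from pure noise and remove a little of it at each step.

    ASSUMPTION: `target` plays the role of the network's prediction.
    A real diffusion model learns this from data; here it is given.
    """
    rng = random.Random(seed)
    # Step 0: a "frame" of pure Gaussian noise, same size as the target.
    frame = [rng.gauss(0.0, 1.0) for _ in target]
    history = [frame]
    for step in range(steps):
        # Fraction of the remaining gap to close at this step. It grows
        # to 1.0 on the final step, so the last frame lands on the target.
        blend = 1.0 / (steps - step)
        frame = [x + blend * (t - x) for x, t in zip(frame, target)]
        history.append(frame)
    return history


def mean_abs_error(frame, target):
    """Average distance between a frame and the target, per pixel."""
    return sum(abs(x - t) for x, t in zip(frame, target)) / len(target)


target = [0.0, 0.25, 0.5, 0.75, 1.0]  # hypothetical 5-pixel "image"
history = toy_denoise(target, steps=50)
errors = [mean_abs_error(f, target) for f in history]
print(f"error at start: {errors[0]:.3f}, error at end: {errors[-1]:.3f}")
```

Each pass through the loop leaves the frame a little less random and a little closer to the target, which is the "becoming more defined" described above. A video model would do this for many frames at once rather than for five numbers.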

What really makes it special is the ability to understand how the objects – people or anything else – in the scene would realistically interact with everything else. This could mean that water makes objects wet when they pass through it, or that a dropped ball lands and moves along the ground realistically.

Just as ChatGPT understands words from their context and learns how they fit together with other words to communicate meaning, Sora understands how things act and behave in real-world contexts. OpenAI hasn’t given details about the data it was trained on, but it is likely to be many, many hours of real-world video footage from which it can learn how objects, people, animals, and landscapes move and interact.

In addition to generating entirely new footage, it can continue existing video and recreate existing footage from new angles.

Is the world ready for generative video on demand?

Sora offers amazing possibilities. But allowing everyone to create realistic videos of whatever they want clearly won’t be without danger.

Scams and phishing attacks could become more sophisticated, for example by using deepfake videos to make fraudulent activities appear more legitimate or plausible. We’ve seen this before with AI voiceovers overlaid on celebrity footage to make it seem like they’re giving their endorsement.

It will inevitably become easier to create non-consensual videos that bear convincing resemblances to real people, which could be used to cause harm or for blackmail purposes.

I am sure we will also see it used to try to subvert democratic processes and spread fake news and disinformation, with the aim of undermining trust in politicians, governments or institutions.

OpenAI tells us that it has built protections into its algorithms to prevent many of these uses and is also developing its own tools to help identify harmful content. But as we’ve seen with ChatGPT, it’s highly likely that workarounds will be found, or that copycat products will emerge without protections in place.

Addressing these issues will require a concerted effort involving education, legislation, and the adoption of robust frameworks around responsible and ethical use of AI. Unfortunately, as has been the case with any transformative technology, from mechanization to automobiles and computing, it seems inevitable that damage will be done.

But the genie is now out of the bottle, meaning it’s up to responsible AI users and advocates to ensure society effectively manages these risks while also enabling the technology’s transformative potential to be realized.