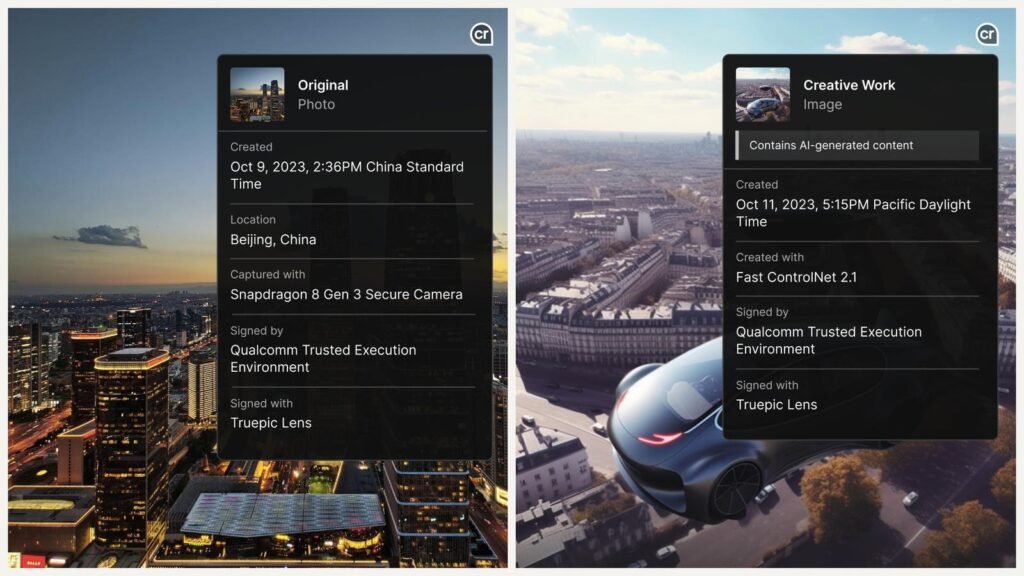

The new technology will allow smartphones to insert an image’s provenance data – such as time, location and the camera app used to create the image – at the hardware level, which could help people to identify real and fake images.

Truepic and Qualcomm

Chip giant Qualcomm and camera maker Leica have announced new technology built into their latest hardware to classify images as real or synthetic.

With AI-generated content spreading across the internet, businesses and individuals are grappling with ways to differentiate what’s real and what’s not. Digital watermarks can be useful here, but they are easily removed and AI detection tools are not always precise.

Today, several companies are beginning to deploy technology that would allow devices such as smartphones to insert unalterable cryptographic provenance data – information about how, when and where a piece of content originated – in the images they create. This metadata, which would be difficult to fake because it is stored at the hardware level, is designed to make it easier for users to confirm whether something is real or AI-generated.

According to Judd Heape, vice president of camera at Qualcomm, this is the most “foolproof”, scalable and secure way to differentiate between real and fake images. That’s why Qualcomm announced this week that smartphones from makers such as Samsung, Xiaomi, OnePlus and Motorola that use its latest chipset, the Snapdragon 8 Gen 3 mobile platform, can embed so-called “content credentials” in an image as soon as it is created.

Similarly, German camera maker Leica announced this week that its new camera will digitally stamp each photo with similar identifying information: the photographer’s name and the time and location at which the photo was taken.

Both announcements are part of a larger industry-wide effort called the Coalition for Content Provenance and Authenticity (C2PA), an alliance between Adobe, Arm, Intel, Microsoft and Truepic aimed at developing global technical standards for certifying the origin and history of multimedia content. Andrew Jenks, president of C2PA, said that while allowing hardware to insert metadata into images is not a perfect solution for identifying AI-generated content, it is more secure than watermarking, which is “fragile”.

“As long as the file remains whole, the metadata is there. If you start editing the file, the metadata may be stripped or deleted. But it’s kind of the best we have right now,” Jenks said. “The question is what approaches do we need to bring together to get a relatively robust response to misinformation and disinformation.”

Qualcomm’s new chipset will use technology developed by Truepic, a startup partner of C2PA whose tools are used by banks and insurers to verify content. The technology uses cryptography to encode an image’s metadata, such as time, location and camera application, and bind it to each pixel. If the image was made using an AI model, the technology similarly encodes the model and prompt used to generate it. As the file circulates across the internet and is edited or modified using AI or other technology, the changes are added to the metadata in the form of a digitally signed “claim”, much as a document is digitally signed. If the image is edited on a machine that does not support C2PA’s content credentials, the edit will still be recorded in the metadata, but as an “unsigned” or “unknown” edit.
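The general idea of binding metadata to pixels and chaining signed edit records can be illustrated with a minimal Python sketch. This is not Truepic’s or Qualcomm’s implementation. The names `DEVICE_KEY`, `make_record` and `check_record` are hypothetical, and an HMAC with a shared demo key stands in for the certificate-based signatures that the C2PA specification relies on, simply to keep the example within the standard library.

```python
import hashlib
import hmac
import json
from typing import Optional

# Hypothetical demo key. On a real device the signing key would sit in
# secure hardware and the signature would be certificate-based, not an HMAC.
DEVICE_KEY = b"demo-device-key"


def sign(payload: dict, key: bytes) -> str:
    """Sign a canonical JSON encoding of the payload (HMAC as a stand-in)."""
    body = json.dumps(payload, sort_keys=True).encode()
    return hmac.new(key, body, hashlib.sha256).hexdigest()


def make_record(pixels: bytes, metadata: dict,
                key: Optional[bytes], previous: str = "") -> dict:
    """Bind metadata to the pixel data via a hash, then sign the result.

    Passing key=None models an edit made by a tool that does not
    support provenance signing: the edit is recorded but left unsigned.
    """
    payload = {
        "pixel_hash": hashlib.sha256(pixels).hexdigest(),
        "metadata": metadata,
        "previous": previous,  # links this record to the one before it
    }
    signature = sign(payload, key) if key is not None else None
    return {"payload": payload, "signature": signature}


def check_record(record: dict, pixels: bytes, key: bytes) -> str:
    """Return 'valid', 'invalid' or 'unsigned' for a record and image."""
    if record["signature"] is None:
        return "unsigned"
    sig_ok = hmac.compare_digest(record["signature"],
                                 sign(record["payload"], key))
    pixels_ok = (record["payload"]["pixel_hash"]
                 == hashlib.sha256(pixels).hexdigest())
    return "valid" if sig_ok and pixels_ok else "invalid"


original = b"\x00\x01\x02"  # placeholder bytes standing in for image pixels
capture = make_record(original, {"app": "camera", "place": "demo"}, DEVICE_KEY)
print(check_record(capture, original, DEVICE_KEY))            # valid
print(check_record(capture, original + b"\xff", DEVICE_KEY))  # invalid

edited = original + b"\xff"
edit = make_record(edited, {"action": "edited"}, None,
                   previous=capture["signature"])
print(check_record(edit, edited, DEVICE_KEY))                 # unsigned
```

Because the signature covers a hash of the pixels, changing even one byte of the image makes the original record fail verification, while an edit from a non-supporting tool shows up as an unsigned entry in the chain.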

By embedding metadata that proves their origin in real images, the hope is that it will be easier for people who see these images circulating on the internet to trust that they are real – or immediately know that they are fake.

Several image creation apps, like Adobe’s generative AI tool Firefly and Bing’s image maker, already label images with content-identifying information, but it can be stripped or lost when the file is exported. Truepic’s technology, by contrast, creates metadata that will be stored in the most secure part of Qualcomm’s chip, where critical data like credit card information and facial recognition information is also kept, so it cannot be falsified, Heape said.

Truepic CEO Jeffrey McGregor said the startup has focused on proving what’s real rather than detecting what’s fake – what he calls a more “proactive” and “pre-emptive” approach – because detection techniques that attempt to identify what is fake result in an endless “cat-and-mouse game”. Indeed, AI tools are advancing faster than detection tactics, which rely on spotting discrepancies in AI-generated content. Newer, more powerful versions of AI models could create artificial images that are more resistant to technological attempts at detection.

“In the long term, there will be a lot more investment in the generative side of artificial intelligence and the quality will quickly outpace the ability of detectors to detect accurately,” he said.

McGregor said he believes that using smartphone chips to ensure that images contain information about their origin can work at large scale. But there is a downside to this method: smartphone manufacturers and app creators must choose to use it. Qualcomm’s Heape said convincing them to do so was a priority.

“We are lowering the barrier to entry because it will run directly on Qualcomm hardware. So we can activate them immediately,” he said.

Another challenge: some applications may not support this new type of metadata. Qualcomm’s Heape said he hopes that eventually all device hardware and third-party apps will support C2PA’s content credentials. “I want to live in a world where all smartphones, whether Qualcomm or otherwise, adopt the same standards,” he said.