These new types of networks are revolutionizing the way we think about AI

In many ways, we are heading into a very exciting era of innovation: artificial intelligence and machine learning are worlds more advanced than they were just a few years ago. (This really should be obvious to almost everyone, but it sets the stage.)

One of the easiest examples to see is the convergence of new tools like ChatGPT, Sora, and whatever else is popping up today, often without much foreshadowing.

But those of us on the front lines also see something else: new forms of neural networks that do much more with a smaller, more compact construction…

In a previous article I talked about liquid neural networks and closed-form continuous-time models.

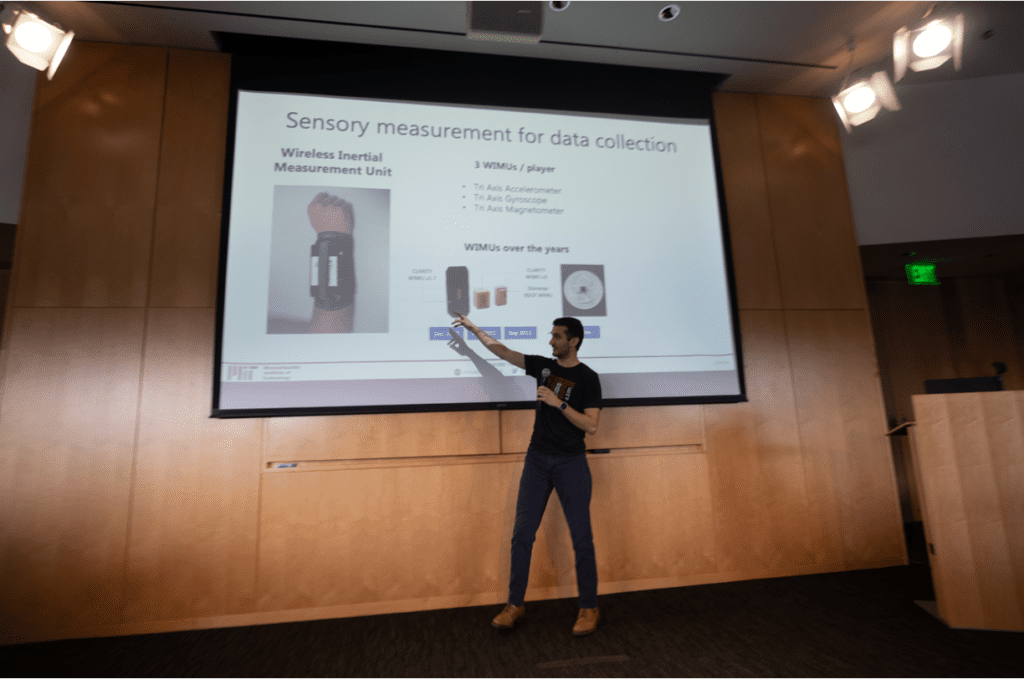

Alexander Amini presents in an MIT class

I wanted to revisit this because MIT AI scientist Alex Amini shared some interesting ideas during a recent session of a weekly MIT class we run, including thoughts on what really goes on behind the construction of these systems. Amini is also a co-founder of a company called LiquidAI, a pioneer of the systems mentioned above.

To illustrate how he initially approached AI, Amini began with two examples of projects from earlier in his career.

The first is a fascinating look at his earlier life as he grew into a teenager – Amini recounts how he moved to Ireland at the age of 14 and became involved in two of his biggest hobbies at the time: coding and tennis.

How did the two come together?

Well, he took a complex system, an observation system you could say (I’m talking about watching the game) and then… he literally changed the game!

Amini explained how sensor systems of the time were typically located close to the field: they observed the action from a spectator’s seat.

But he thought it would be better, in some way, to put the cameras on the players. And that got him thinking more about how this type of time series information is captured. For one thing, body anatomy represents a unique data set.

“Just the location of a joint can tell us a lot about the configuration of the rest of the body,” he said, “especially if you’re in a constrained environment, like playing a sport, right? If… an arm moves a certain way, there is a very limited set of different joint angles that the rest of the body will follow.”

He also touched on some broader use cases:

“What we were really creating was a system of personal refinement, for behaviors and movements… (the subject didn’t) necessarily need to be an athlete,” he said. “Later, I realized this much broader vision of the business, and then I expanded it into other areas, like rehabilitation, medical rehabilitation, where patients are learning to walk again.”

His other example came from his much later doctoral work, a few years ago, on advances in neural networks – after, as he pointed out, he had acquired more mathematics and engineering experience.

This other view concerns rule-based systems and the question of constraints.

“AI doesn’t work well with constraints in general,” he said.

Responding to a question from the audience, he explained how legible text, for example, is difficult to generate inside an image.

“We only found this solution after we found the problem,” he said, discussing ways to overcome these obstacles and access increasingly dynamic AI capabilities.

Once again, the solution is a game changer: it’s about considering new models and new ways of doing things.

As I mentioned previously, CfCs are small networks based on the study of the nervous systems of certain small organisms. They use models of artificial synapses to represent the transfer of data between neurons.

Amini also explained how people think of them as continuous processing nodes of a system, and how this allows them to build something much more compact and efficient, reducing the size of the neural network from perhaps a thousand neurons down to, say, 19…
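
For readers who like to see the idea in code, here is a minimal sketch of what a single "continuous processing node" might look like. It is my own illustration, not LiquidAI's implementation: it assumes a simplified liquid time-constant style update, dx/dt = -(1/tau + f) * x + f * A, where the gate f depends only on the input, and it integrates that equation with plain Euler steps. The names `ltc_step` and `simulate` and the parameters `tau`, `A`, `w` and `b` are hypothetical.

```python
import math


def sigmoid(z):
    """Standard logistic function, used here as a stand-in synapse model."""
    return 1.0 / (1.0 + math.exp(-z))


def ltc_step(x, inp, dt, tau=1.0, A=1.0, w=1.0, b=0.0):
    """One explicit Euler step of dx/dt = -(1/tau + f) * x + f * A.

    Assumption: the gate f depends only on the input. In the published
    models it can also depend on the neuron state itself.
    """
    f = sigmoid(w * inp + b)              # input-dependent gate in (0, 1)
    dxdt = -(1.0 / tau + f) * x + f * A   # the gate changes the decay rate
    return x + dt * dxdt


def simulate(inputs, dt=0.01, x0=0.0, **params):
    """Run the neuron over a sequence of inputs and return the state trace."""
    x = x0
    trace = []
    for inp in inputs:
        x = ltc_step(x, inp, dt, **params)
        trace.append(x)
    return trace


if __name__ == "__main__":
    # Hold the input at zero and let the state settle.
    trace = simulate([0.0] * 2000)
    print(round(trace[-1], 4))
```

The point of the sketch is that the input changes how quickly the neuron's state decays, not just its value, which is one way to read the "liquid" in the name. With a constant input, the state settles at f * A / (1/tau + f).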

To resolve some of the constraints mentioned earlier, he proposed a sort of “auditor” that sits on top of the model, observes it, and determines whether it is making an error.

In some of our previous talks, speakers discussing the purpose of this kind of neural network explained that it can process vision differently than a traditional network does.

These new liquid AI neurons have many practical applications – our MIT CSAIL Lab Director, Daniela Rus, can tell you all about their potential for autonomous vehicles, for example.

You can read more about this in future articles, but I think these forays into innovation are central to our idea of where AI is headed today.

(Full disclosure: I am an advisor for LiquidAI.)