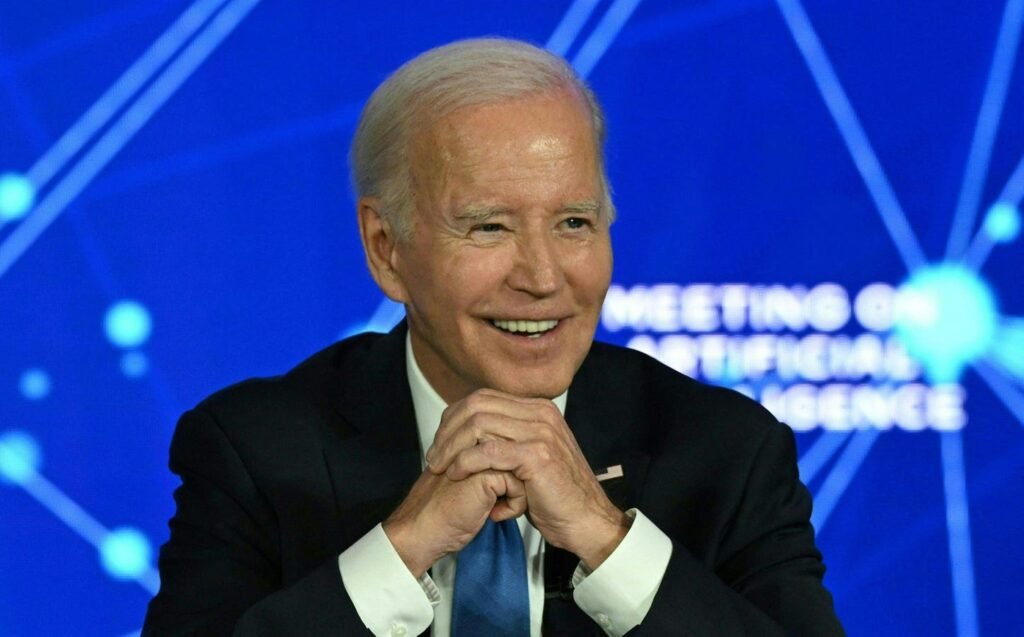

US President Joe Biden discusses artificial intelligence during an event in San Francisco in June 2023.

Photo by ANDREW CABALLERO-REYNOLDS/AFP via Getty Images

Tech companies largely applaud the new regulations, which seek to govern how the federal government will use AI and establish guidelines for companies building new models.

President Biden on Monday signed an ambitious new executive order aimed at placing guardrails on the use and development of AI, including provisions that will subject future large AI models like OpenAI’s GPT-5 to oversight before release.

Speaking to a room of lawmakers, industry executives and reporters at the White House on Monday, Biden described how the executive order was designed to mitigate the risks of AI while continuing to harness its benefits. “I am determined to do everything in my power to promote and demand responsible innovation,” Biden said, calling AI “the most important technology of our time.”

Under the executive order, any company building an AI model that could pose a national security risk must disclose it to the government and share data about what is being done to secure it, in accordance with federal standards that will be developed by the National Institute of Standards and Technology. The requirement to share pre-release testing data applies only to models that have not yet been released, which would include GPT-5, the highly anticipated successor to the popular GPT-4.

“Companies must notify the government about the large-scale AI systems they develop and share the results of rigorous independent testing to prove they pose no risk to national security or the safety of the American people,” Biden said at the event.

Ben Buchanan, special advisor for AI at the White House, told Forbes that models already in use, such as GPT-4 or Google’s Bard, are still subject to other elements of the executive order, including “provisions on fairness, discrimination, consumer and worker protection.” So far, however, he added, no catastrophe involving GPT-4 has come to light, to his knowledge.

The order also aims to launch a recruiting drive for AI workers in the federal government with “dozens, if not hundreds” of AI-focused hires, Buchanan said. Additionally, he said, it will reduce immigration barriers for international workers in the AI sector. This does not include raising the cap on the number of H-1B visas, Buchanan said, but he noted that there will be more emphasis on streamlining the overall visa process for people working on “critical emerging technologies.”

The order also calls for guidelines and standards for the government’s own use of AI. Addressing concerns that AI could be used to discriminate against citizens, target critical infrastructure or wage war, the executive order will also require large AI models and programs to be evaluated by federal agencies before being deployed. Federal agencies – from the Department of Defense to the Department of Justice – will also be required to produce studies describing how they plan to integrate AI into their functions. Certain provisions relating to security issues are expected to come into force within the next 90 days.

The executive order is the Biden administration’s broadest attempt yet to create workable safeguards for the development of artificial intelligence while cementing the United States as a leader in AI policy. Since taking office amid promises to rein in big tech, the Biden administration has suffered setbacks in its attempts to enforce antitrust regulations and has failed to address the privacy concerns that have long plagued the technology industry. The order explicitly calls on Congress to pass bipartisan data privacy legislation, recognizing that AI increases incentives for invasive data collection.

The arrival of the wildly popular ChatGPT late last year clearly highlighted the promise and potential dangers of AI, and the US government has been rushing to introduce safeguards ever since – an effort that has sparked a fierce debate on the balance between promoting innovation and protecting consumers.

“The reason we’re trying to develop such a nuanced but comprehensive approach here is because we also see huge benefits,” Buchanan told Forbes. “And the president’s direction here is that we can mitigate the risks of this technology in order to harness the benefits.”

Leaders of AI startups have welcomed the government’s approach. “You can’t manage what you can’t measure, and with this order, the government has taken significant steps toward creating third-party measurement and monitoring of AI systems,” Jack Clark, co-founder and head of policy at Anthropic, told Forbes by email.

“Streamlining the immigration of skilled AI workers is perhaps the best thing to do in this space,” Manu Sharma, co-founder and CEO of AI startup Labelbox, said in an email. “AI is still in its infancy, and we need the brightest minds to help the United States accelerate the pace of innovation.”

Some, however, have expressed concerns about how the order could help industry giants like Google and Microsoft. “It is very reassuring that the Biden administration has acted so quickly to prioritize responding to serious and ongoing AI risks, including those related to protecting cybersecurity, critical infrastructure, and national security,” said Aidan Gomez, co-founder and CEO of Cohere. “That said, we must remain cautious and avoid the government building a regulatory regime that reinforces the power of incumbents.”

The executive order has broad authority within the federal government to establish standards and guidelines for various agencies. For example, to combat AI-based fraud, such as “deepfake” videos or AI-generated voice calls, it asks the Commerce Department to develop guidelines for federal agencies to use watermarking and content authentication tools to label AI-generated content.

But some measures would require the cooperation of the agencies involved before they can be fully implemented. The order, for example, calls on the Federal Trade Commission to strengthen its antitrust and consumer protection measures against AI companies, even though the order itself does not have the power to direct the agency.

When it comes to enforcement, the order invokes the Defense Production Act to require companies to notify the government when they build large foundation models that could threaten national security. Buchanan also cited how other regulations, such as anti-discrimination laws, will be used to mitigate AI risks. “We have, I think, quite a bit of leverage to bring to bear here on a bunch of different issues,” Buchanan told Forbes. “I think in some cases it’s fair to say we look at the big picture and we commission important studies, but in many cases we use the force of the law.”

Unlike in previous technology eras, AI leaders are actively engaging with governments, rather than openly avoiding regulators. OpenAI CEO Sam Altman undertook a world tour to convince world leaders of the technology’s importance and to position himself to shape its regulation. Google CEO Sundar Pichai has been calling for AI rules for years; in a 2020 opinion piece in the Financial Times, he wrote: “Now there is no doubt in my mind that artificial intelligence needs to be regulated. It’s too important not to. The only question is how to approach it.”

In statements, Kent Walker, Google’s president of global affairs, and OpenAI spokesperson Elie Georges both praised the government for its focus on boosting AI’s potential. OpenAI and Google, along with other companies like Nvidia and more than a dozen others, have already signed on to a series of voluntary commitments the Biden administration released earlier this year to ensure their models are safe and trustworthy. Many of these companies already have large red teams testing their models. Nvidia declined to comment on the executive order.

Biden’s executive order comes as the European Union moves closer to introducing the world’s first AI laws, under the European AI Act, which would give the bloc the ability to ban or shut down AI services deemed harmful to society. Other countries are also moving to restrict the use of AI, including Australia, which is seeking to introduce laws to ban deepfake videos.

Later this week, Vice President Kamala Harris plans to represent the administration at a major AI summit in London, where she will outline the administration’s AI policies and call for greater cooperation with U.S. allies and adversaries on regulating AI companies.

Senator Chuck Schumer is leading a campaign in Congress to introduce AI legislation. Earlier this month, the New York senator led an “AI Insight Forum” attended by venture capitalist Marc Andreessen and AI startup founders like Cohere’s Gomez, according to The Washington Post. But it remains unclear exactly what guardrails the senator hopes to introduce, or what the legislation would look like.

Although many in technology circles recognize the need for AI legislation, others openly oppose any rules that could curb the explosive growth of the AI industry. In a widely derided manifesto released this month, Andreessen wrote that stifling AI innovation was tantamount to “murder.”

Kenrick Cai and Richard Nieva contributed reporting.